Presto on ARM – Chunxu Tang & Jiaming Mai, Alluxio

Traditionally, the deployment of Presto has been limited to Intel processors with the x86 architecture. However, with the growing popularity of the ARM architecture, Chunxu and Jiaming have extended the Presto ecosystem to ARM and conducted a series of benchmark experiments. Their objective is to evaluate the performance of Presto on ARM and identify key insights from the experiments. In this presentation, Chunxu and Jiaming will share the results of their performance evaluation and discuss the most significant findings from their research.

Speeding Up Presto in ByteDance – Shengxuan Liu, ByteDance & Beinan Wang, Alluxio

Shengxuan Liu from ByteDance and Beinan Wang from Alluxio will present the practical problems they encountered and the interesting findings they made during the launch of Presto Router and Alluxio Local Cache. Their talk covers how ByteDance’s Presto team implemented cache invalidation and a dashboard for Alluxio’s Local Cache. Shengxuan will also share his experience using a customized cache strategy to improve cache efficiency and system reliability.

Scaling Cache for Presto Iceberg Connector – Beinan Wang, Alluxio & Chunxu Tang

When the Presto Iceberg connector is in use, Presto’s in-heap cache is likely to become overloaded. In this talk, Beinan and Chunxu will share the design, implementation, and optimization of an off-heap cache that addresses these scalability challenges. You will learn how to cache Iceberg data and metadata for the Presto Iceberg connector, followed by future work on improving table scans using Apache Arrow.

Architecting Your Data Platform with Presto and Alluxio in Heterogeneous Environment – Adit Madan

As the cloud is evolving and the adoption of a hybrid-cloud or multi-cloud approach grows, the data architecture must adapt to heterogeneous environments. In this talk, Adit Madan shares insights on how to architect a data platform with Presto and Alluxio that provides agility and simplicity to your data team.

Dynamic UDF Framework and its Applications – Rongrong Zhong, Alluxio & Yanbing Zhang, ByteDance

Presto has supported dynamically registered User Defined Functions (UDFs) since 2020. Over the years, we have used this framework to add support for SQL UDFs and remote / external UDFs. One common community request in the UDF domain is support for Hive UDFs. Many companies have legacy Hive pipelines and engineers who are familiar with HQL and Hive UDFs. With remote UDFs, one can implement Hive UDF support as UDFs running on a remote cluster. But since Hive UDFs are written in Java, we can also run them inside the engine. We extended the dynamic UDF framework to support Java UDFs, and used this new extension to add Hive UDF support in Presto. With this feature, users can directly use their familiar Hive UDFs and UDAFs in their Presto queries.

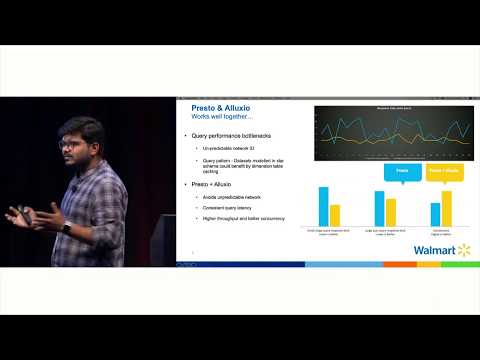

Speed Up Presto at Uber with Alluxio Caching – Chen Liang, Uber & Beinan Wang, Alluxio

At Uber, Presto is heavily used as one of the primary data analytics tools, and Presto’s query performance has a profound impact on production. As part of the Presto optimization effort, we explored Alluxio as a caching solution. Alluxio is an open source data orchestration platform that many compute frameworks use as a caching layer. Alluxio caching is currently enabled on ~2000 nodes across 6 clusters at Uber. In this presentation, we will talk about our journey of integrating Alluxio cache into Presto at Uber. We will discuss the Uber-specific challenges we encountered and how we addressed them, and present the performance improvements we have seen. We will also discuss our plans and next steps, and potential future collaboration opportunities with the community.

Self-serve Data Architecture Evolution with Presto and Alluxio Across Clouds – Adit Madan, Alluxio

In this presentation, Adit Madan shares insights to help architect a data platform that minimizes the impact of change and evolution. He will correlate industry trends for a multi-tenant environment with how the Presto & Alluxio stack drives agility for hundreds of users in the cloud, across multiple datacenters and a hybrid cloud.

After RaptorX: Improve Performance Understanding and Workload Analysis in Presto – Ke Wang & Bin Fan

RaptorX, an umbrella project presented at PrestoCon Day in March, enabled the Presto interactive fleet at Facebook to reduce latency by 10x, based on a set of architectural improvements and optimizations with hierarchical caching. This presentation provides an update on the follow-up enhancements. Bin Fan from Alluxio will talk about the exploration of a probabilistic algorithm in Alluxio caching to estimate the cache working set, and the implementation of shadow cache. Ke Wang from Facebook will talk about how shadow cache is used to understand system bottlenecks for better resource allocation and query routing decisions. She will also cover a recent improvement in collecting and aggregating per-query runtime statistics on the Presto engine to better understand the time breakdown, resource usage breakdown, and cache hit rate on a per-query basis, which can help identify areas of improvement.
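
The abstract does not say which probabilistic algorithm the talk covers. Purely as an illustration of the general idea, the sketch below assumes a Bloom filter and uses the standard fill-ratio formula to estimate how many distinct cache keys have been seen, without storing the keys themselves. The class name, parameters, and key format are hypothetical and are not taken from Alluxio or Presto.

```python
import hashlib
import math


class BloomWorkingSetEstimator:
    """Toy Bloom filter that estimates the number of distinct keys seen.

    Hypothetical illustration only; not the Alluxio shadow cache code.
    """

    def __init__(self, num_bits=1 << 16, num_hashes=4):
        self.num_bits = num_bits
        self.num_hashes = num_hashes
        self.bits = bytearray(num_bits)  # one byte per bit, for readability

    def _positions(self, key):
        # Derive num_hashes bit positions from one SHA-256 digest
        # using double hashing: h1 + i * h2 (h2 forced odd).
        digest = hashlib.sha256(key.encode("utf-8")).digest()
        h1 = int.from_bytes(digest[:8], "big")
        h2 = int.from_bytes(digest[8:16], "big") | 1
        return [(h1 + i * h2) % self.num_bits for i in range(self.num_hashes)]

    def add(self, key):
        # Record an access; repeated accesses to a key set the same bits.
        for pos in self._positions(key):
            self.bits[pos] = 1

    def estimate_distinct(self):
        # Standard estimate from the fill ratio: n ~ -(m / k) * ln(1 - X / m),
        # where m is the bit count, k the hash count, X the number of set bits.
        set_bits = sum(self.bits)
        if set_bits == self.num_bits:
            return float("inf")  # filter is saturated; no estimate possible
        fill = set_bits / self.num_bits
        return -(self.num_bits / self.num_hashes) * math.log(1 - fill)


if __name__ == "__main__":
    estimator = BloomWorkingSetEstimator()
    for _ in range(5):  # each page is accessed five times
        for i in range(1000):
            estimator.add(f"page-{i}")
    print(round(estimator.estimate_distinct()))
```

Because repeated accesses to the same key do not change the filter, the estimate tracks the number of distinct pages touched rather than the total number of requests, which is the quantity a working-set estimate needs.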

A Tour of Presto Iceberg Connector – Beinan Wang, Alluxio & Chunxu Tang, Twitter

Apache Iceberg is an open table format for huge analytic datasets. The Presto Iceberg connector brings together the SQL engine and the table format to enable high-performance data analytics. Here, Beinan and Chunxu will share the architectural design of the Presto Iceberg connector, its support for advanced Iceberg features (such as the native Iceberg connector, row-level deletion, and Iceberg v2 support), and the future roadmap.